Manual marking - using rubrics and scorecards

DRAFT DOCUMENTATION

If you want to mark student work manually instead of (or as well as) relying on automarking, you can add a rubric to a problem, which will allow you to create scorecards for each student (i.e. mark them against the rubric).

How to set up a rubric

When you're in the problem authoring interface, click 'Show manual marking rubric configuration', then select the Rubric tab:

Now you'll be able to describe the rubric (in markdown) and add categories and items to it, in a similar way to defining the automated tests. The total available points for a rubric are calculated automatically by taking the highest-scoring item in each category and adding these together (see Maximum Points). A 'bonus' category is excluded from this calculation, so points from it cannot push a student's total above the maximum. You can drag categories and items to change their ordering. For more details on how rubrics and scorecards are modelled, see the 'Model of rubrics and rubric scorecards' section below.

If you want to copy a rubric from one problem to another, use the 'import/export' buttons at the top to save the rubric to disk, then load it into the other problem.

How to mark/view submissions via the marking dashboard

Markers will usually want to mark via the marking dashboard, as it leads them directly to on-time, passing submissions (judged against the course end date). The marking dashboard is accessible from the course page for coordinators and tutors:

Before searching, you will probably want to narrow the results down to particular problems or groups, then click 'Search'. You can choose to show only unmarked submissions, but the results are not refreshed automatically, so you will need to repeat your search as marking progresses, particularly if several people are marking.

The dashboard links to only one submission per user per problem, no matter how many submissions they have made. This is the 'most markable' submission, chosen by checking the following criteria in order and taking the submission that matches the first one that applies:

- the submission with the most recent submitted scorecard (i.e. manual marks) associated with it, regardless of time (i.e. may be late)

- the latest submission that passes the tests

- the latest submission

The main implication is that the most recently submitted scorecard determines which submission counts for the marks export. So if you mark an earlier submission, that becomes the canonical submission for the dashboard and the marks export, and its scorecard is the one you see in the marker view and the one the student will see when the marks are released. Even if you're not using rubrics for marking, you can use this to 'select' a different submission for the export by creating a placeholder rubric.

If you choose 'no' for 'show late submissions', an 'on time' condition is added to the second and third criteria. However, a late submission that has the most recent submitted scorecard will still be shown.
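The selection order above, including the 'show late submissions' option, can be sketched in Python. This is a minimal sketch, not the system's actual code: it assumes each submission records when it was made, whether it passes the tests, and when its most recent submitted scorecard was created. The `Submission` class, `most_markable` function and `show_late` parameter are hypothetical names, and 'on time' is assumed to mean submitted at or before the deadline.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Submission:
    id: int
    submitted_at: datetime
    passes_tests: bool
    # Hypothetical field: time of the latest *submitted* scorecard on this submission.
    last_scorecard_at: Optional[datetime] = None


def most_markable(
    submissions: list[Submission], deadline: datetime, show_late: bool = True
) -> Optional[Submission]:
    # 1. The submission with the most recent submitted scorecard wins, even if late.
    marked = [s for s in submissions if s.last_scorecard_at is not None]
    if marked:
        return max(marked, key=lambda s: s.last_scorecard_at)

    # With show_late=False, criteria 2 and 3 only consider on-time submissions.
    candidates = (
        submissions if show_late
        else [s for s in submissions if s.submitted_at <= deadline]
    )

    # 2. The latest submission that passes the tests.
    passing = [s for s in candidates if s.passes_tests]
    if passing:
        return max(passing, key=lambda s: s.submitted_at)

    # 3. The latest submission (None if nothing qualifies).
    return max(candidates, key=lambda s: s.submitted_at, default=None)
```

Checking the scorecard criterion before applying the on-time filter is what lets a late but marked submission still appear when late submissions are hidden.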

Marker view

This view is accessible via the marking dashboard (which links to particular submissions as described above) or via the tutor dashboard (which shows the student's latest code by default, unless you load another submission). Every change you make to the scorecard is saved, even if the scorecard hasn't been submitted.

Unsubmitted scorecards are only visible to the marker who created them. At the moment, this means that once you've started marking, you will only see your own unsubmitted marks, even if someone else has since marked the problem. If you don't want your unsubmitted marks, you can simply leave them, since nobody else can see them. If it's important for you to see the current marks in the marker view, you can get someone else to create an unsubmitted scorecard (by making any minor edit), submit your 'bad' scorecard, then get them to submit the correct scorecard. Or just email us and we'll fix it up. We're planning to improve this aspect!
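The visibility rule described above can be sketched as follows. This is a minimal sketch based only on the behaviour described here, not on the system's code: `Scorecard` and `scorecard_shown_to` are hypothetical names, and it assumes a marker's own unsubmitted scorecard takes priority over everything else, while other markers' unsubmitted scorecards are never shown.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Scorecard:
    marker: str
    updated_at: datetime
    submitted: bool


def scorecard_shown_to(viewer: str, scorecards: list[Scorecard]) -> Optional[Scorecard]:
    # The viewer's own unsubmitted scorecard hides everything else.
    own_drafts = [s for s in scorecards if not s.submitted and s.marker == viewer]
    if own_drafts:
        return max(own_drafts, key=lambda s: s.updated_at)
    # Otherwise show the latest submitted scorecard; other markers' drafts stay hidden.
    submitted = [s for s in scorecards if s.submitted]
    return max(submitted, key=lambda s: s.updated_at, default=None)
```

Under this model, the workaround works because submitting your 'bad' scorecard removes your draft, after which the other marker's later submission becomes the latest submitted scorecard.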

All marks are attached to particular submissions. If an autosave has been loaded, then you'll be prevented from marking:

You can load any submission from the submission history and start marking, and the scorecard will be attached to that submission. If a scorecard is already attached to a different submission and you start modifying it, you will get a copy of that scorecard attached to the current submission. The old scorecard remains in the system; however, at the moment there is no way to recover old scorecards without contacting us.

As discussed above, marking a particular submission makes it the submission that will be used by the export, overriding the standard behaviour of choosing the latest passing submission (or the latest submission if no passing submission exists).

Once you've marked it, you should end up with something like this:

Note that you can use markdown in comments - as you can in the rubric descriptions and category descriptions - and clicking on a comment will allow you to edit it.

Student view

The Assessment tab (i.e. the student scorecards) is released when the 'Manual marks released at' date on the Course Module passes:

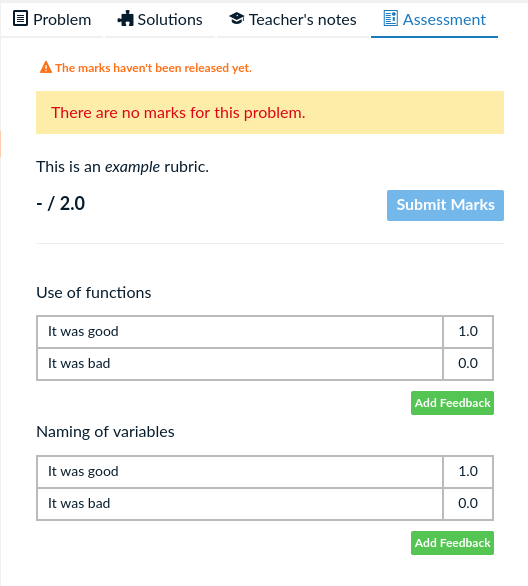

If this is blank, the marks will not be visible. Before the marks are released, students will see the rubric in the assessment tab, but not any of their scores, and there'll be a warning:

After the marks are released, students will see a small red notification dot on the Assessment tab, and will see the scorecard in the same way markers do (but be unable to change it, of course!). If the scorecard applies to something other than their most up-to-date code, which is often the case because they may have autosaved code after their submission, they'll also see a warning about that:

What the students see here is basically identical to what markers see, aside from not being allowed to modify it.
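The release rules for the student view can be summarised as a small function. This is a minimal sketch under stated assumptions: `student_view` and its flag names are hypothetical, the release date is treated as reached at exactly `release_at`, and the scorecard's submission and the student's latest code are compared by hypothetical ids.

```python
from datetime import datetime
from typing import Optional


def student_view(
    release_at: Optional[datetime],
    now: datetime,
    scorecard_code_id: int,
    latest_code_id: int,
) -> dict[str, bool]:
    # A blank 'Manual marks released at' date means marks are never shown.
    released = release_at is not None and now >= release_at
    return {
        "show_rubric": True,  # the rubric is visible before and after release
        "show_scores": released,
        "show_unreleased_warning": not released,
        "show_notification_dot": released,
        # Warn when the marked code is not the student's most up-to-date code.
        "show_stale_code_warning": released and scorecard_code_id != latest_code_id,
    }
```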

Exporting manual marks

Model of rubrics and rubric scorecards

This recaps some of the discussion above, but touches a little more on the internal details, and may be useful for markers and course coordinators to understand.

Rubrics are made up of three models: Rubric (attached to a Problem), Categories (attached to a Rubric), and Items (attached to a Category). The total number of points available for a rubric is determined by finding the maximum points available in each non-bonus category, and adding them together. For example:

Here, we have three Categories, each with two Items. The maximum points are 2 because the third Category is a bonus Category and so is left out of the calculation. In this example, this means that a total of 3 points is capped at 2.
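The calculation can be sketched as follows. The `Category` class and the `max_points` and `total_score` functions are hypothetical names, and because the item values in the original example aren't shown here, the values used below (0 and 1 points per item) are assumptions chosen to reproduce the cap from 3 to 2.

```python
from dataclasses import dataclass


@dataclass
class Category:
    name: str
    item_points: list[float]  # points for each Item in the Category
    bonus: bool = False


def max_points(categories: list[Category]) -> float:
    # Sum the highest-scoring Item in each non-bonus Category.
    return sum(max(c.item_points) for c in categories if not c.bonus and c.item_points)


def total_score(categories: list[Category], chosen: dict[str, float]) -> float:
    # Add up the points chosen in every Category (bonus included),
    # then cap the result at the rubric maximum.
    raw = sum(chosen.get(c.name, 0) for c in categories)
    return min(raw, max_points(categories))


example = [
    Category("A", [0, 1]),
    Category("B", [0, 1]),
    Category("Bonus", [0, 1], bonus=True),
]
print(max_points(example))                                   # 2
print(total_score(example, {"A": 1, "B": 1, "Bonus": 1}))    # 2 (3 capped at 2)
```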

Once you have a valid Rubric attached to a problem that is marked 'visible', you can create Scorecards through the marker view (see above). A scorecard consists of a Scorecard and a number of ScorecardItems, each of which links to exactly one Item in a category. Each category may also have a ScorecardComment. Once there is a ScorecardItem for every category, the Scorecard can be submitted; it is then visible to people other than the marker and used for the marks export. A submitted Scorecard and its Comments and Items cannot be changed in the database; if anyone (the creator or another marker) attempts to change it, a new unsubmitted Scorecard is created as a copy of it. If that copy is submitted, the old Scorecard remains in the database but is currently not usually visible to the user. The Scorecard with the latest timestamp is the one that 'wins'.
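The lifecycle described above (submit once every category has an item, copy on edit) can be sketched in Python. This is an illustrative model only: the classes, field names and the `submit` and `editable_copy` functions are hypothetical, and the in-memory id counter stands in for database keys. The copy-to-current-submission behaviour follows the marker view section.

```python
import itertools
from dataclasses import dataclass, field

# Hypothetical id generator standing in for database primary keys.
_next_id = itertools.count(1)


@dataclass(frozen=True)
class ScorecardItem:
    category_id: int
    item_id: int  # links to exactly one rubric Item


@dataclass
class Scorecard:
    submission_id: int
    marker: str
    # The current ScorecardItem for each category, keyed by category id.
    items: dict[int, ScorecardItem] = field(default_factory=dict)
    # Optional ScorecardComment text per category.
    comments: dict[int, str] = field(default_factory=dict)
    submitted: bool = False
    scorecard_id: int = field(default_factory=lambda: next(_next_id))

    def can_submit(self, category_ids: set[int]) -> bool:
        # Submittable once every category has a ScorecardItem.
        return category_ids.issubset(self.items.keys())


def submit(card: Scorecard, category_ids: set[int]) -> None:
    if not card.can_submit(category_ids):
        raise ValueError("every category needs a ScorecardItem before submitting")
    card.submitted = True


def editable_copy(card: Scorecard, current_submission_id: int, marker: str) -> Scorecard:
    """Return the scorecard that an edit should be applied to."""
    if card.submitted or card.submission_id != current_submission_id:
        # Submitted scorecards are never modified, and each scorecard belongs to one
        # submission, so edits go to a new unsubmitted copy on the current submission.
        return Scorecard(
            submission_id=current_submission_id,
            marker=marker,
            items=dict(card.items),
            comments=dict(card.comments),
        )
    return card
```

The original scorecard object is left untouched by `editable_copy`, mirroring how the old Scorecard stays in the database.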

Internal note: every 'click' on an item creates a new ScorecardItem, and old ScorecardItems are _not_ removed; we only use the latest item when displaying them to the user. It would make more sense to upsert the item, but this dates from a design that didn't have the 'submit scorecard' behaviour. This is similar to the way we persist all Scorecards but display the latest one for a problem, except when a particular submission is linked to (via #submission-<id>), in which case we load the latest scorecard for that submission.
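The 'keep every row, display the latest' approach can be sketched as follows. The row classes and function names are hypothetical, and parsing the link assumes numeric submission ids; the real code may look up scorecards differently.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class ScorecardItemRow:
    category_id: int
    item_id: int
    created_at: datetime


@dataclass(frozen=True)
class ScorecardRow:
    scorecard_id: int
    submission_id: int
    created_at: datetime


def current_selection(rows: list[ScorecardItemRow]) -> dict[int, int]:
    """Map each category to the item from its most recent click; old rows are kept."""
    latest: dict[int, ScorecardItemRow] = {}
    for row in rows:
        prev = latest.get(row.category_id)
        if prev is None or row.created_at >= prev.created_at:
            latest[row.category_id] = row
    return {cat: row.item_id for cat, row in latest.items()}


def parse_submission_fragment(fragment: str) -> Optional[int]:
    """Extract <id> from a '#submission-<id>' link, assuming numeric ids."""
    prefix = "#submission-"
    rest = fragment[len(prefix):]
    if fragment.startswith(prefix) and rest.isdecimal():
        return int(rest)
    return None


def scorecard_to_display(
    cards: list[ScorecardRow], linked_submission_id: Optional[int] = None
) -> Optional[ScorecardRow]:
    """Latest scorecard overall, or the latest for the linked submission if there is one."""
    if linked_submission_id is not None:
        cards = [c for c in cards if c.submission_id == linked_submission_id]
    return max(cards, key=lambda c: c.created_at, default=None)
```

An upsert would replace the loop in `current_selection` with a single row per category, which is the simplification the note above suggests.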